Agentic investments have been significant and increasingly strategic. Eighteen months ago, AI agents were largely the domain of pilot programs and proofs of concept. Today, they're becoming embedded in customer support flows, employee onboarding, internal knowledge bases, and countless other workflows across organizations of every size.

CFOs are no longer observing AI spend from a distance. Today, they're owning the budget and demanding accountability for it. Boards want to know if AI is being deployed and how it’s creating durable business value, which makes the following pattern worth examining carefully.

93% of enterprise AI budget goes to the technology itself, with infrastructure, models, and deployment consuming the vast majority of spend. The remaining 7% goes to the behavior change, adoption, and measurement needed to realize the return on that investment.

This isn't a failure of ambition. It simply reflects where the tooling ecosystem was when organizations began deploying agents at scale. The tools for building agents matured quickly. The tools for understanding how users experience them are still catching up, and the gap between those two things is where a significant amount of AI value quietly disappears.

Agents can be technically flawless, but still fail

Today, the dominant approach to monitoring AI agents comes from the developer observability stack: traces, spans, latency measurements, token consumption, hallucination detection, error rates. Tools like LangSmith, Arize, and Braintrust were built by engineers for engineers, and they do their job well. They tell you whether your agent is running and help you answer questions, like:

- Did the agent respond?

- How long did it take?

- What did it cost per token?

- Did it hallucinate?

- Where did the API fail?

These are necessary questions, but they're not sufficient on their own.

An agent may look perfect at first glance (fast responses, low error rates, clean traces) and still be failing the people it was built to serve. It can answer questions quickly while consistently missing what users actually needed. It can have excellent uptime while sitting largely unused because users found it easier to open a support ticket.

Product people, on the other hand, are asking different kinds of observability questions:

- Did users get what they needed?

- Where are they getting frustrated?

- Which use cases are actually emerging?

- Is adoption growing or stalling?

- Is this worth scaling?

Both sets of questions matter. But only the second tells you whether your AI investment is delivering business value, and for most organizations right now, that set is going largely unmeasured.

The hybrid SaaS and agentic experience nobody is tracking

There's a structural reason why measuring agents in isolation gives an incomplete picture: users don't experience agents in isolation.

In most enterprise environments, an agent sits inside a broader software experience. A user navigates a dashboard, hits a friction point, and turns to an AI agent. What they do afterward (whether they complete the task, open a support ticket, or abandon entirely) tells you far more about whether the agent succeeded than the agent's response itself. The full session is the unit of measurement that matters.

When that data lives in separate systems, you can't see that users who struggle with a particular workflow are more likely to abandon the agent interaction that follows. You can't see that your agent is fielding questions a better-designed UI would have answered on its own. The transition from click-based software to agentic software is happening across the entire user experience, and you need to be able to see it that way.
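To make the "full session" idea concrete, here is a minimal Python sketch that labels each session by what the user did after their last agent interaction. The event names (`agent_prompt`, `task_completed`, `support_ticket_opened`) and the session data are hypothetical, chosen only for illustration; they are not Pendo's event schema.

```python
from collections import Counter

# Hypothetical event names, assumed for this sketch only.
AGENT = "agent_prompt"
COMPLETED = "task_completed"
TICKET = "support_ticket_opened"


def outcome_after_agent(events: list[str]) -> str:
    """Label a session by what happened after the last agent interaction."""
    if AGENT not in events:
        return "no_agent"
    # Index of the last agent prompt in the ordered event list.
    last_agent = len(events) - 1 - events[::-1].index(AGENT)
    after = events[last_agent + 1:]
    if COMPLETED in after:
        return "completed"
    if TICKET in after:
        return "ticket"
    return "abandoned"


# Made-up sessions: ordered event names per session id.
sessions = {
    "s1": ["open_dashboard", AGENT, COMPLETED],
    "s2": ["open_dashboard", AGENT, AGENT, TICKET],
    "s3": ["open_dashboard", AGENT],
    "s4": ["open_dashboard", COMPLETED],
}

summary = Counter(outcome_after_agent(e) for e in sessions.values())
print(summary)
```

A labeling step like this only works when product events and agent events share one timeline, which is the point the section is making.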

Pendo captures that full view: what users do before, during, and after agent interactions, in a single dataset, alongside all of your existing product analytics.

It's the only way to understand what's actually happening as the user experience changes. And it's what Pendo Agent Analytics was built to answer.

What Agent Analytics actually measures

When Pendo launched Agent Analytics in June 2025, the premise was straightforward: measuring an AI agent's impact should look a lot like measuring any other significant product capability.

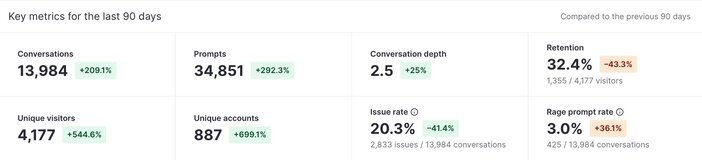

Adoption. Engagement depth. Retention. Task completion. And the friction signals that predict abandonment before it becomes churn. A year in, that framing has held and gotten sharper around a specific set of metrics that turn out to be the leading indicators of agentic health. Product people need to measure agent performance metrics, like:

- Issue Rate: The share of conversations where the agent fails to resolve the user's need. Repeated guidance, timeouts, off-script responses. The leading indicator of friction.

- Rage Prompt Rate: Users rephrasing repeatedly, using all-caps, or sending rapid failed prompts. The conversational equivalent of a rage click.

- Retention Rate: The share of users returning to the agent over time. The clearest signal of durable value versus novelty.

What makes these key metrics, in addition to the others captured in Agent Analytics, meaningful is that they're tracked alongside the rest of the user's experience in the product. A rising Issue Rate in isolation is a signal. A rising Issue Rate that correlates with a steep decline in overall product adoption or stickiness is actionable intelligence.
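As a rough illustration of how these three metrics could be computed from conversation logs, consider the Python sketch below. The `Conversation` record, the `resolved` flag, the rage heuristic (an all-caps or repeated prompt), and the cutoff-based retention definition are all assumptions made for this example; Pendo's actual definitions may differ.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Conversation:
    user_id: str
    day: date
    prompts: list[str]
    resolved: bool  # hypothetical flag: did the agent resolve the need?


def is_rage(prompts: list[str]) -> bool:
    """Assumed heuristic: any all-caps prompt, or the same prompt repeated."""
    normalized = [p.strip().lower() for p in prompts]
    shouted = any(p.isupper() for p in prompts)
    repeated = len(set(normalized)) < len(normalized)
    return shouted or repeated


def issue_rate(convs: list[Conversation]) -> float:
    """Share of conversations where the agent did not resolve the need."""
    return sum(not c.resolved for c in convs) / len(convs) if convs else 0.0


def rage_prompt_rate(convs: list[Conversation]) -> float:
    """Share of conversations that match the rage heuristic."""
    return sum(is_rage(c.prompts) for c in convs) / len(convs) if convs else 0.0


def retention_rate(convs: list[Conversation], cutoff: date) -> float:
    """Share of users active on or before the cutoff who return after it."""
    early = {c.user_id for c in convs if c.day <= cutoff}
    late = {c.user_id for c in convs if c.day > cutoff}
    return len(early & late) / len(early) if early else 0.0


# Made-up sample data for illustration.
convs = [
    Conversation("u1", date(2025, 6, 2), ["How do I export a report?"], True),
    Conversation("u2", date(2025, 6, 3), ["reset password", "RESET PASSWORD"], False),
    Conversation("u3", date(2025, 6, 4), ["show usage", "show usage"], False),
    Conversation("u1", date(2025, 6, 10), ["Where are my invoices?"], True),
]

print(issue_rate(convs), rage_prompt_rate(convs), retention_rate(convs, date(2025, 6, 7)))
```

In practice the `resolved` signal is the hard part; here it is simply given, whereas a real system would have to infer it from the conversation and what the user does next.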

The same principles that deliver great products apply to agents too

Pendo was built on two beliefs that have held since the beginning.

The first: to genuinely understand how users experience software, you need more than one type of signal. Quantitative data tells you what users do. Qualitative feedback tells you what they think. Sentiment tells you how they feel. Visual replays show you exactly what they saw. And conversational data—the fifth and newest dimension—captures what users say to your agent and how it impacts their experience. Together, they give you the full picture.

The second belief is what makes the first one useful: analytics and guidance belong in the same tool. Understanding where users struggle is only valuable if you can act on it. Insight without the ability to respond is just expensive awareness.

Both beliefs apply directly to AI agents. Agent Analytics extends all of these dimensions to conversational AI: quantitative metrics like Issue Rate, Rage Prompt Rate, and Retention surface patterns at scale; Session Replay lets you watch the exact moment a user's patience ran out; sentiment surfaces how users feel about what they're prompting; and qualitative signals reveal what users want the agent to do that it currently can't. When a friction point surfaces across any of those dimensions, Pendo Guides let product teams act on it immediately, without an engineering sprint.

When those five signals and the ability to respond to them live in one platform, the question shifts from "how is the agent doing?" to "how is the agent changing the way people use my product?"

What you deployed vs. what users actually want

Every agent is built with a purpose: handle onboarding questions, assist with expense reporting, surface relevant documentation. Those are the intended use cases: the workflows a team deliberately designed and invested in. But users interact with agents on their own terms, asking questions the agent wasn't designed for and attempting workflows no one anticipated.

Pendo surfaces these emergent use cases automatically through semantic clustering—grouping prompts by theme without manual tagging—so you can see which behaviors are actually driving engagement, and which intended use cases are quietly being ignored.
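To give a feel for grouping prompts by theme without manual tags, here is a deliberately simplified Python sketch. It is not how Pendo implements semantic clustering: real semantic clustering typically relies on text embeddings, while this stand-in uses word overlap (Jaccard similarity) with a greedy assignment. The stopword list, the `threshold` value, and the sample prompts are all assumptions.

```python
import re

# Small, assumed stopword list for this toy example.
STOPWORDS = {"a", "an", "the", "my", "i", "do", "how", "to", "is", "for", "where"}


def tokens(prompt: str) -> set[str]:
    """Lowercase words in the prompt, minus stopwords."""
    return {w for w in re.findall(r"[a-z]+", prompt.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_prompts(prompts: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedy clustering: join the most similar existing cluster, else start a new one."""
    clusters: list[dict] = []
    for p in prompts:
        t = tokens(p)
        best = max(clusters, key=lambda c: jaccard(t, c["vocab"]), default=None)
        if best is not None and jaccard(t, best["vocab"]) >= threshold:
            best["prompts"].append(p)
            best["vocab"] |= t  # grow the cluster's vocabulary
        else:
            clusters.append({"vocab": set(t), "prompts": [p]})
    return [c["prompts"] for c in clusters]


# Made-up prompts for illustration.
prompts = [
    "How do I submit an expense report?",
    "Submit expense report for travel",
    "Reset my password",
    "Password reset is not working",
    "Where is the expense report form?",
]

groups = cluster_prompts(prompts)
for g in groups:
    print(len(g), g)
```

Word overlap misses paraphrases that share no vocabulary ("can't log in" versus "password reset"), which is exactly the gap embedding-based approaches are meant to close.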

Using Agent Analytics, Pushpay, a payments provider, learned that users were abandoning conversations after just three or four prompts. Seeing exactly where the drop-off was happening let the team redesign that specific experience based on real user requests rather than assumptions. They now use Agent Analytics to regularly monitor their AI search tool, and have found that users who rely on it cut their time to access critical information from 1-2 minutes to 10 seconds.

Connecting agent behavior to business outcomes

The measurement question that matters most to a CFO may not be adoption explicitly, but rather return. And the way to prove return on an AI agent investment is to connect agent interactions to downstream business results.

Pendo does this through conversion funnels and path comparisons that treat the agent as a step in the user's workflow rather than a separate system. Does a user who interacts with the booking assistant have a higher rate of completing a reservation? Does the employee using the AI-powered expense tool close their report faster than the one following the traditional click-based path? Is the agentic way actually better than what it replaced?
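A path comparison of this kind can be sketched in a few lines of Python. The `Session` record, the event names, and the numbers below are hypothetical and exist only to show the shape of the calculation: the completion rate for sessions that went through the agent versus sessions that did not.

```python
from dataclasses import dataclass


@dataclass
class Session:
    session_id: str
    events: list[str]  # ordered event names (hypothetical schema)


def reached_goal(session: Session, goal: str, agent_event: str) -> bool:
    """For agent sessions, count the goal only if it occurs after the first agent event."""
    events = session.events
    if agent_event in events:
        return goal in events[events.index(agent_event) + 1:]
    return goal in events


def compare_paths(sessions: list[Session], agent_event: str, goal: str) -> dict[str, float]:
    """Goal completion rate for agent-assisted versus click-only sessions."""
    groups: dict[str, list[Session]] = {"agent": [], "click_only": []}
    for s in sessions:
        groups["agent" if agent_event in s.events else "click_only"].append(s)
    return {
        name: (sum(reached_goal(s, goal, agent_event) for s in group) / len(group) if group else 0.0)
        for name, group in groups.items()
    }


# Made-up sessions for illustration.
sessions = [
    Session("s1", ["view_dashboard", "agent_prompt", "reservation_completed"]),
    Session("s2", ["view_dashboard", "agent_prompt", "support_ticket_opened"]),
    Session("s3", ["view_dashboard", "reservation_completed"]),
    Session("s4", ["view_dashboard"]),
    Session("s5", ["view_dashboard", "agent_prompt", "reservation_completed"]),
    Session("s6", ["view_dashboard"]),
]

rates = compare_paths(sessions, agent_event="agent_prompt", goal="reservation_completed")
print(rates)
```

A gap between the two rates is a correlation, not proof of cause: users who choose to ask the agent may differ from those who don't, so a comparison like this is a starting point for investigation rather than a final ROI figure.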

For internal-facing deployments, the ROI case is often measured in labor terms: how many routine queries is the agent handling, and what does that free up? For customer-facing deployments, it shows up in retention and satisfaction data. In both cases, the proof requires connecting the agent interaction to what happens next, which is only possible when the data lives in the same place.

Answering the AI ROI questions your board is already asking

Somewhere in your organization right now, someone is preparing a slide about AI ROI. It might be for a board meeting, a budget review, or a leadership offsite. And the uncomfortable truth is that for most organizations, that slide is built on deployment metrics—how many agents are live, how many prompts are being sent, how much was spent—because that's the only data available.

Deployment metrics don't answer the question boards are actually asking. They want to know whether the investment is working, whether users are adopting tools and seeing productivity gains, and whether this is a foundation to build on (or an expensive experiment to wind down). Those answers require a different kind of data, and right now, most organizations don't have it.

That's the gap Agent Analytics closes. It adds the layer your engineering stack was never designed to provide: the user's experience. What they needed, whether they got it, where they gave up, and what that means for whether you expand, hold, or pull back. See how it works in this video.

![](https://cdn.builder.io/api/v1/image/assets%2F6a96e08774184353b3aa88032e406411%2F4d3fd498d2084371881ec92a295d1d5b?format=webp)

![](https://cdn.builder.io/api/v1/image/assets%2F6a96e08774184353b3aa88032e406411%2F9ff22b3acf8b4ea9a53fe5510823b5ad?format=webp)

![](https://cdn.builder.io/api/v1/image/assets%2F6a96e08774184353b3aa88032e406411%2F38e671e34e79473f863a92e1a6574112?format=webp)