Here's something that’s been bothering me.

With AI, teams are shipping faster than they ever have. But the systems they use to understand what they've shipped haven't changed at all. Someone still has to manually tag features, maintain dashboards, and check those dashboards when they remember to.

That "someone" can't keep up anymore. And when they fall behind, the data people use to make decisions quietly drifts away from the product that's actually running in production. Routes get renamed. Features get refactored. New workflows ship. The instrumentation doesn't follow. We started calling this analytics drift, and once you see it in a codebase, you can't unsee it.

To help builders solve this, we built and launched Novus, the product agent that connects directly to your codebase to automatically instrument, analyze, and improve your product with every release. It delivers product intelligence for engineers, agents, and fast-moving product teams, so they always have a clear view of product performance no matter how quickly they ship.

Why builders need Novus now

There's a conversation happening in every team building with AI right now: how do you give your agents and models the context they need to do useful work? Better prompts help. Better skills help. But what actually moves the needle is ground-truth product understanding: an accurate, current picture of how your product works and how people actually use it.

As our VP of product design, I've been building with Claude Code, and the difference between useful output and generic output comes down to exactly this. When the AI understands real behavior and feedback (who the users are, where they get stuck, what actually matters), it makes better decisions faster. Without that context, it still builds things that work; it's just blind to what grows your business, like driving adoption, reducing friction, and improving retention.

For most teams, that ground-truth understanding is either missing, stale, or locked inside someone's head. It decays every time the team ships something new. That's the problem that needs solving, not just for humans making decisions, but for every agent and tool operating alongside your codebase.

So when we started designing Novus, those constraints became the brief:

- It should understand the product through the code, with no manual tagging required.

- It should stay current as the codebase changes. Instrumentation that rots defeats the whole purpose.

- It should surface what matters without waiting for someone to ask. Continuous observation, not periodic check-ins.

- It should connect insight to something reviewable and actionable: pull requests and in-product changes, not more reports.

- The team should always decide what ships. Novus proposes, humans approve.

How Novus works

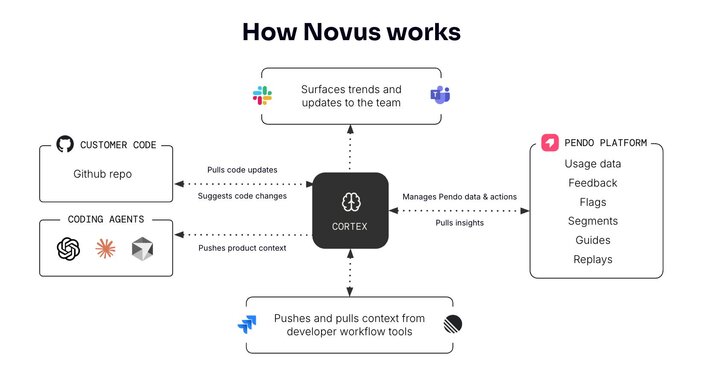

Everything in Novus runs on top of Cortex, the product context graph that sits at the core. Cortex is a living model of your product: what it is, how it's structured, how people actually use it, and what that usage means for your business. It connects code, behavior, and outcomes in a single, continuously updated picture, without anyone having to configure or maintain it by hand.

This is also what connects Novus to Pendo's broader product intelligence layer. Pendo has spent more than a decade building infrastructure to understand product behavior at scale — analytics, session replay, feedback, predictions. Cortex is what keeps all of that grounded in reality as software changes.

When your codebase evolves, Cortex evolves with it, so the intelligence it generates always works from an accurate picture of what's actually running in production. Novus is how product intelligence works in the AI era, where software ships daily, instrumentation can't be manual, and the feedback loop has to run by itself.

The best way to understand how it works is to follow a single release from start to finish.

Step 1: Connect your repo — Novus builds the map

To get started, you connect a GitHub repo, and you're ready to go. Novus reads the code and builds what we call Memory: a living structural map of your product. This includes routes, UI elements, user and account identification patterns, client- and server-side track event candidates, and workflows.

If your product includes AI agents, Novus detects and instruments those too. It configures session replay so that sensitive information isn't collected, and it sets up a theme for in-app guides that matches your application. The onboarding flow asks about your role and goals, which shapes how Novus prioritizes and frames everything downstream.

Within minutes, Novus already knows things about your product that would take a PM weeks to document manually. And unlike documentation, Memory stays accurate. When code changes, it updates automatically.

Step 2: You ship something — Novus reviews the PR

A developer pushes a change and opens a pull request. Before it merges, Novus runs two checks in parallel.

The first is a diff analysis: what changed, what that means for product understanding, what instrumentation needs updating. You’ll probably never think about manually instrumenting for analytics again.
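To make analytics drift concrete, here is a minimal sketch of the comparison a diff analysis has to make: which existing events point at routes that no longer exist, and which routes have no instrumentation at all. The `TrackedEvent` type, the `instrumentation_drift` function, and the idea of keying events by route are hypothetical simplifications for illustration; this is not Novus's API or how it actually models your product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedEvent:
    # Hypothetical shape: an analytics event name and the route it fires on.
    name: str
    route: str


def instrumentation_drift(
    tracked: list[TrackedEvent], current_routes: set[str]
) -> tuple[list[TrackedEvent], set[str]]:
    """Compare existing instrumentation with the routes in the latest build.

    Returns (events whose route no longer exists,
             routes that exist but have no tracked event).
    """
    orphaned = [e for e in tracked if e.route not in current_routes]
    instrumented = {e.route for e in tracked}
    uninstrumented = current_routes - instrumented
    return orphaned, uninstrumented


# Example: /settings/billing was renamed to /billing in the PR under review.
tracked = [
    TrackedEvent("billing_viewed", "/settings/billing"),
    TrackedEvent("dashboard_viewed", "/dashboard"),
]
routes_after_pr = {"/dashboard", "/billing"}

orphaned, missing = instrumentation_drift(tracked, routes_after_pr)
print([e.name for e in orphaned])  # ['billing_viewed']
print(sorted(missing))             # ['/billing']
```

A real product map would also cover UI elements, workflows, and server-side events, and would need to recognize the rename as one change rather than a removal plus an addition; this sketch only shows the underlying set difference.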

The second is a UX review. This is the one that's gotten the strongest reaction from teams that have used it. Most PR review tools check code quality or system architecture. Novus checks whether the experience you're shipping will actually work for your users. It surfaces issues at the line level: wrong color semantics, inconsistent interaction patterns, dead click zones, accessibility gaps. Where behavioral data exists to back it up, it shows you that too.

Here's what that looks like in practice. In one recent PR, Novus noticed that when an error occurred in the application, the code logged it but never surfaced anything to the user. No message, feedback, or indication that anything had gone wrong. A classic UX blind spot that's easy to miss in a diff and genuinely frustrating to encounter as a user.

Another example that stuck with me more: a PR introduced a new authentication flow but was missing a redirect at the end, meaning users who completed the flow successfully landed nowhere. The UX review flagged the missing redirect before the PR merged, and backed it up with behavioral data showing that hundreds of visitors had hit the same dead end on a similar flow in the preceding weeks. A silent failure that would have been invisible until someone filed a support ticket.

The engineer reviews suggestions exactly as they would any PR comment. Novus doesn't block the PR; it informs the decision. Nothing reaches production without a human sign-off.

Step 3: Novus builds the launch

This is where Novus goes from observing to acting.

When a meaningful change merges (a new feature, a significant workflow update), Novus doesn't just track it; it sets up the launch. That means a feature flag with compound rollout segments (internal team first, then active users, then a percentage sample) and in-product guides attached to the new experience, all connected to the pages and features Novus already understands from Memory.
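As a rough illustration of what a compound rollout like this can look like, here is a minimal sketch of a three-stage flag check. The `User` fields, the stage numbers, the 10% default, and the hash-based bucketing are all assumptions chosen for the example, not Novus's or Pendo's flag implementation.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    id: str
    is_internal: bool  # member of your own team
    is_active: bool    # "active" per whatever recency rule you use (assumption)


def in_percentage_sample(user_id: str, flag_key: str, percent: int) -> bool:
    # Stable bucket in [0, 100) per (flag, user): the same user always lands
    # in the same bucket, so raising `percent` only ever adds users.
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


def flag_enabled(user: User, flag_key: str, stage: int, sample_percent: int = 10) -> bool:
    """Hypothetical three-stage rollout; stage 0 means the flag is off.

    Stage 1: internal team. Stage 2: plus active users.
    Stage 3: plus a percentage sample of everyone else.
    """
    if user.is_internal:
        return stage >= 1
    if user.is_active:
        return stage >= 2
    return stage >= 3 and in_percentage_sample(user.id, flag_key, sample_percent)


staff = User("u1", is_internal=True, is_active=True)
regular = User("u2", is_internal=False, is_active=True)
print(flag_enabled(staff, "new-auth-flow", stage=1))    # True
print(flag_enabled(regular, "new-auth-flow", stage=1))  # False
print(flag_enabled(regular, "new-auth-flow", stage=2))  # True
```

Hashing the flag key together with the user ID gives each user a stable bucket, so someone already in the sample stays in it as the percentage grows, and different flags draw different samples.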

You review the plan and approve it. Novus does the work.

Then you set a goal in plain language, such as "increase adoption of the new flow by 20% in 30 days." Novus maps it to the artifacts it already understands and establishes a baseline, and from that point on, every Signal it surfaces is framed against that goal.
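A goal like this needs one interpretive decision before it can be tracked: whether "20%" means a relative lift or 20 percentage points. The sketch below assumes a relative lift over a measured baseline adoption rate; the `AdoptionGoal` class and its fields are hypothetical and not how Novus stores goals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AdoptionGoal:
    # Hypothetical goal record; field names are illustrative only.
    baseline_rate: float  # share of eligible users using the flow when the goal is set
    relative_lift: float  # 0.20 for "by 20%", read as a relative increase (assumption)
    days: int             # evaluation window, e.g. 30

    @property
    def target_rate(self) -> float:
        return self.baseline_rate * (1 + self.relative_lift)

    def progress(self, current_rate: float) -> float:
        """Share of the required lift achieved so far; negative if adoption fell."""
        required = self.target_rate - self.baseline_rate
        if required <= 0:
            raise ValueError("goal needs a positive baseline and a positive lift")
        return (current_rate - self.baseline_rate) / required


goal = AdoptionGoal(baseline_rate=0.25, relative_lift=0.20, days=30)
print(round(goal.target_rate, 4))     # 0.3
print(round(goal.progress(0.27), 2))  # 0.4
```

With a 25% baseline, the relative reading sets the target at 30%, while a percentage-point reading would set it at 45%, which is why it helps to pin the interpretation down when the goal is created.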

Step 4: Signal surfaces, investigation runs, PR opens

While Memory keeps product understanding current, Signals is the other half of Cortex: continuous observation of real user behavior across the product map that Memory maintains. Signals fall into three types: issues, opportunities, and insights.

When something goes wrong with your launch (adoption isn't moving, friction is appearing in the new flow, users are dropping off where they shouldn't), Novus surfaces it as a narrative explanation backed by data, not just a metric that moved.

You click into the Signal and trigger an investigation. Novus cross-correlates the behavioral data, pulls in session replays, root-causes the issue, and produces a fix plan at the code level.

For instance, an investigation into dead click frustration on a dashboard surfaced three distinct causes: placeholder cards with no click handlers, a chat input with dead zones in the padding, and content sections rendered as non-interactive. Novus identified all three, root-caused each one, and proposed specific code changes. The engineer reviewed the plan, approved it, and Novus opened the PR.
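Dead-click frustration of this kind is commonly measured with a simple heuristic: a click followed by no visible response (no navigation, no UI change) within a short window. The sketch below shows that heuristic, assuming you already have click and UI-change timestamps; the one-second window, the `Click` shape, and the `dead_clicks` function are assumptions, not Novus's or Pendo's definition of a dead click.

```python
from bisect import bisect_left
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Click:
    element: str  # selector of the clicked element (hypothetical format)
    t_ms: int     # click timestamp in milliseconds


def dead_clicks(clicks: list[Click], ui_changes_ms: list[int], window_ms: int = 1000) -> Counter:
    """Count, per element, clicks with no UI change within window_ms afterwards."""
    changes = sorted(ui_changes_ms)
    counts: Counter = Counter()
    for click in clicks:
        # Index of the first UI change at or after this click.
        i = bisect_left(changes, click.t_ms)
        responded = i < len(changes) and changes[i] - click.t_ms <= window_ms
        if not responded:
            counts[click.element] += 1
    return counts


session = [
    Click("#placeholder-card", 0),
    Click("#placeholder-card", 5_000),
    Click("#nav-reports", 9_000),
]
ui_changes = [9_200]  # only the nav click caused a visible change
print(dead_clicks(session, ui_changes).most_common())
# [('#placeholder-card', 2)]
```

Grouping dead clicks by element is what turns scattered frustration into a short list of causes; explaining why each element is dead (a missing handler, padding outside the input's clickable area) still requires reading the code.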

By the time you're reviewing the PR, Novus has already connected the code change to the behavior to the fix. That's the loop — and it doesn't need anyone to pedal it.

We’re learning and building in public

Novus is in closed beta. The system works, and the teams we've been building with have given us strong signal that we’re heading in the right direction. But there are things we're still actively working through.

- Instrumentation accuracy across architectures. Auto-instrumentation performs well across the codebases we've tested, but we haven't seen every framework, pattern, or edge case. Some repos will need correction on the first pass. We're learning fast, and every design partner helps us get better at it.

- What "right" looks like for the review workflow. We've intentionally kept humans in the loop on every action Novus proposes. That means nothing merges automatically and nothing reaches production without approval. We think that's the right call. Our industry is wrestling with the question of how much control is truly needed, and how much is just people being hesitant. We're here to figure it out together.

- The scope of what we instrument. Novus handles human-generated flows well today. Products that are substantially agentic, where behavior emerges dynamically from agent decisions, are where we're investing next, and this is an area where Pendo's existing infrastructure gives us a meaningful head start: through Agent Analytics, Pendo is already tracking 2.5 million prompts each week, so Cortex isn't starting from zero when it comes to understanding how AI-driven behavior shows up in production. We're looking for design partners building in that space.

Three things that will never change: Novus doesn't merge code without your approval. It doesn't train on your proprietary code. It doesn't cut you out of the decision-making process.

We'll share what we find as we go. If you're the kind of team that wants to shape something while it's still being built, that's exactly who we're looking for.

Join the Novus beta program

We're working with a small group of design partners (product engineers and technical product owners at companies with fast-moving codebases) who want to help us find the edges and shape what continuous product intelligence looks like in practice.

The interesting part is what Novus surfaces before you've told it anything. Come find out.

Request early access and be part of the closed beta.
